This article was originally published in The Conversation.

Social media users posted ideas about how to protect people’s reproductive privacy when the Supreme Court overturned Roe v. Wade. One idea was to enter “junk” data into apps designed for tracking menstrual cycles.

People use period tracking apps to predict their next period, talk to their doctor about their cycle and identify when they are fertile. Users log everything from cravings to period flow, and the apps make predictions based on these inputs. The predictions help with simple decisions, like when to buy tampons next, and provide life-changing observations, like whether you’re pregnant.

The argument for submitting junk data is that doing so will trip up the apps’ algorithms. That would make it difficult or impossible for authorities or vigilantes to use the data to violate people’s privacy. That argument, however, doesn’t hold water.

We are researchers who develop and evaluate technologies that help people manage their health, and we analyze how app companies collect data from their users to provide useful services. We know that for popular period tracking apps, millions of people would need to enter junk data to even nudge the algorithm.

Also, junk data is a form of “noise,” an inherent problem that developers design algorithms to be robust against. Suppose junk data did “confuse” the algorithm or gave authorities too much data to investigate. The success would still be short-lived, because the app would become less accurate for its intended purpose and people would stop using it.

In addition, it wouldn’t solve existing privacy concerns, because people’s digital footprints are everywhere, from internet searches to phone app use and location tracking. This is why advice urging people to delete their period tracking apps is well-intentioned but off the mark.

How the apps work

When you first open an app, you enter your age, the date of your last period, how long your cycle is and what type of birth control you use. Some apps connect to other apps, such as physical activity trackers. You then record relevant information, including when your period starts, cramps, discharge consistency, cravings, sex drive, sexual activity, mood and flow heaviness.

Once you give your data to the period app company, it is unclear exactly what happens to it. The algorithms are proprietary and part of the company’s business model. Some apps ask for the user’s cycle length, which people may not know. Indeed, researchers found that 25.3% of people said their cycle had the oft-cited length of 28 days; however, only 12.4% actually had a 28-day cycle. So if an app used the data you enter to make predictions about you, it may take a few cycles for the app to calculate your cycle length and predict the phases of your cycle more accurately.
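Because the apps’ algorithms are proprietary, we can’t show what any particular app does. As a rough illustration only, here is a minimal Python sketch that assumes the simplest possible rule. It estimates cycle length as the average gap between logged period start dates, then adds that length to the most recent start. The function names `estimate_cycle_length` and `predict_next_start` and the sample dates are hypothetical.

```python
from datetime import date, timedelta


def estimate_cycle_length(start_dates: list[date]) -> float:
    """Average number of days between consecutive logged period start dates.

    Hypothetical rule for illustration; real apps' methods are proprietary.
    """
    if len(start_dates) < 2:
        raise ValueError("need at least two logged period start dates")
    ordered = sorted(start_dates)
    # Days between each start date and the one after it.
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return sum(gaps) / len(gaps)


def predict_next_start(start_dates: list[date]) -> date:
    """Project the next start date: latest logged start plus estimated cycle length."""
    cycle = estimate_cycle_length(start_dates)
    return max(start_dates) + timedelta(days=round(cycle))


# Hypothetical log covering three cycles of 31, 30 and 32 days.
log = [date(2022, 1, 3), date(2022, 2, 3), date(2022, 3, 5), date(2022, 4, 6)]
print(estimate_cycle_length(log))  # 31.0
print(predict_next_start(log))     # 2022-05-07
```

With only one logged start date, the sketch refuses to make an estimate. This mirrors why an app may need a few cycles before its predictions improve.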

An app could make predictions based on all the data the company has collected from its users, or based on your demographics. For example, the app’s algorithm might know that a person with a higher body mass index may have a 36-day cycle. Or it could use a hybrid approach: it makes predictions based on your data but compares them with the company’s large data set from all its users to tell you what is typical. For instance, it might tell you that a majority of people report having cramps right before their period.
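The sketch below shows one way a hybrid approach could work. It reflects our own assumptions, not any app’s documented method. The technique is shrinkage: the population-wide average counts as a few “pseudo-cycles,” and the user’s own logged cycles outweigh it as they accumulate. The function `hybrid_cycle_estimate`, the 28-day population mean and the weight of three pseudo-cycles are all hypothetical choices for illustration.

```python
def hybrid_cycle_estimate(personal_cycles: list[float],
                          population_mean: float,
                          prior_weight: float = 3.0) -> float:
    """Blend a population-level mean cycle length with a user's own logged cycles.

    Hypothetical shrinkage rule: the population mean counts as `prior_weight`
    pseudo-cycles, so with no personal data the estimate equals the population
    mean, and as more real cycles are logged the user's own data dominates.
    """
    n = len(personal_cycles)
    total = prior_weight * population_mean + sum(personal_cycles)
    return total / (prior_weight + n)


# Hypothetical numbers for illustration only.
print(hybrid_cycle_estimate([], population_mean=28.0))                # 28.0
print(hybrid_cycle_estimate([34, 36, 35], population_mean=28.0))      # 31.5
print(hybrid_cycle_estimate([34, 36, 35] * 4, population_mean=28.0))  # 33.6
```

The estimate moves toward the user’s roughly 35-day cycles as more cycles are logged. It never jumps straight there from a single entry.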

What submitting junk data accomplishes

If you regularly use a period tracking app and give it inaccurate data, the app’s personalized predictions, like when your next period will start, could become inaccurate too. If your cycle is 28 days and you start logging that your cycle is now 36 days, the app should adjust, even though the new information is false.
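The same effect can be shown with a toy model. Purely for illustration, this sketch assumes the app predicts cycle length as the mean of the last six logged cycles. The rule and the `predicted_cycle_length` helper are hypothetical, not taken from any real app.

```python
def predicted_cycle_length(cycle_lengths: list[int], window: int = 6) -> float:
    """Mean of the most recent `window` logged cycle lengths (hypothetical rule)."""
    recent = cycle_lengths[-window:]
    return sum(recent) / len(recent)


true_history = [28] * 6  # six genuine 28-day cycles
print(predicted_cycle_length(true_history))  # 28.0

# The user starts logging false 36-day cycles, one per cycle.
history = list(true_history)
drift = []
for _ in range(6):
    history.append(36)  # a false entry
    drift.append(round(predicted_cycle_length(history), 2))
print(drift)  # [29.33, 30.67, 32.0, 33.33, 34.67, 36.0]
```

After six false entries, the personal prediction matches the fake value. That is exactly how the app is designed to respond to a genuinely changing cycle.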

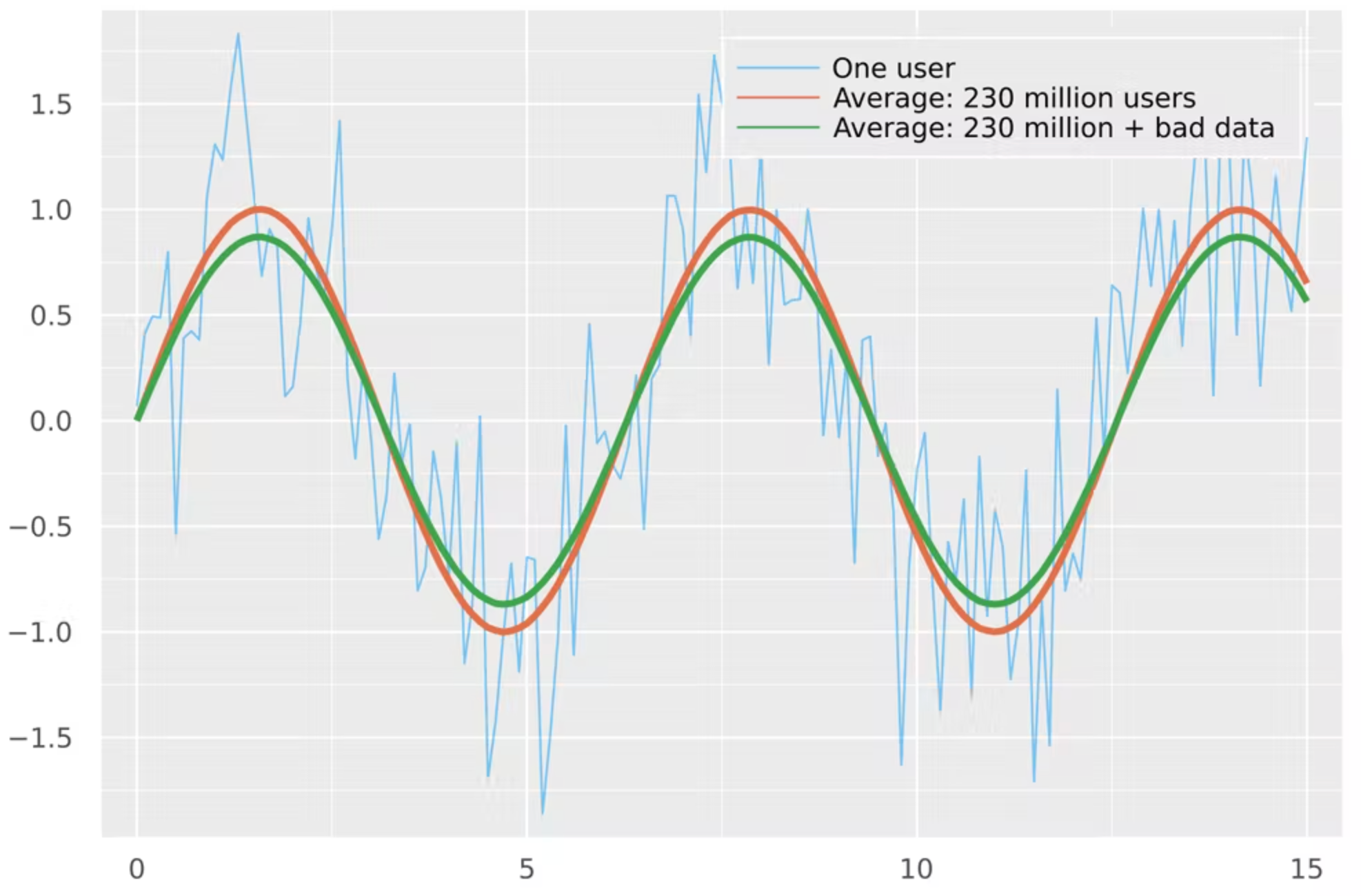

But what about the data in aggregate? The simplest way to combine data from many users is to average it. For example, the most popular period tracking app, Flo, has an estimated 230 million users. Imagine three cases: a single user, the average of 230 million users, and the average of 230 million users plus 3.5 million people submitting junk data.
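Here is a back-of-the-envelope version of the two aggregate cases. The 230 million and 3.5 million figures come from the text above. The 28-day average for genuine users and the 36-day value reported by every junk submitter are our own hypothetical assumptions, chosen to match the example earlier in this piece. The `pooled_mean` helper simply weights each group’s average by its size.

```python
# Hypothetical assumptions (not from the article): genuine users average 28-day
# cycles, and every junk submitter reports a 36-day cycle.
GENUINE_USERS = 230_000_000  # Flo's estimated user base, from the text
JUNK_USERS = 3_500_000       # people submitting junk data, from the text
GENUINE_MEAN = 28.0          # assumed average genuine cycle length (days)
JUNK_VALUE = 36.0            # assumed fake cycle length (days)


def pooled_mean(groups: list[tuple[int, float]]) -> float:
    """Population mean from (count, group_mean) pairs, weighted by count."""
    total_people = sum(count for count, _ in groups)
    return sum(count * mean for count, mean in groups) / total_people


clean = pooled_mean([(GENUINE_USERS, GENUINE_MEAN)])
poisoned = pooled_mean([(GENUINE_USERS, GENUINE_MEAN), (JUNK_USERS, JUNK_VALUE)])

print(f"clean average:    {clean:.2f} days")                 # 28.00 days
print(f"poisoned average: {poisoned:.2f} days")              # 28.12 days
print(f"shift:            {(poisoned - clean) * 24:.1f} hours")  # 2.9 hours
```

Under these assumptions, 3.5 million coordinated junk submitters move the population average by about 0.12 days, which is less than three hours.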

This simple example illustrates three problems. People who submit junk data are unlikely to affect predictions for any individual app user. It would take an extraordinary amount of work to shift the underlying signal across the whole population. And even if that happened, poisoning the data risks making the app useless for those who need it.

Other approaches to protecting privacy

In response to people’s concerns about their period app data being used against them, some period apps made public statements about creating an anonymous mode, using end-to-end encryption and following European privacy laws.

The security of any “anonymous mode” hinges on what it actually does. Flo’s statement says that the company will de-identify data by removing names, email addresses and technical identifiers. Removing names and email addresses is a good start, but the company doesn’t define what it means by technical identifiers.

Texas has paved the way to sue anyone who helps someone else seek an abortion. Meanwhile, 87% of people in the U.S. can be identified from minimal demographic information such as ZIP code, gender and date of birth. Together, these facts mean any demographic data or identifier has the potential to harm people seeking reproductive health care. There is also a massive market for user data, mainly for targeted advertising, which makes it possible to learn a frightening amount about nearly anyone in the U.S.
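Removing direct identifiers such as names and email addresses does not by itself make records anonymous. Combinations like ZIP code, gender and date of birth can still single people out. The toy Python sketch below uses invented records and hypothetical helper names. It strips the direct identifiers, then counts how many records remain unique on those three fields.

```python
from collections import Counter

# Invented records for illustration; no real people or app data.
records = [
    {"name": "A", "email": "a@example.com", "zip": "12345", "gender": "F", "dob": "1994-03-02", "cycle_length": 29},
    {"name": "B", "email": "b@example.com", "zip": "12345", "gender": "F", "dob": "1994-03-02", "cycle_length": 31},
    {"name": "C", "email": "c@example.com", "zip": "12345", "gender": "F", "dob": "1990-11-17", "cycle_length": 27},
    {"name": "D", "email": "d@example.com", "zip": "12346", "gender": "M", "dob": "1988-06-30", "cycle_length": 0},
]

DIRECT_IDENTIFIERS = {"name", "email"}
QUASI_IDENTIFIERS = ("zip", "gender", "dob")


def strip_direct_identifiers(rows):
    """Drop fields like name and email, as a basic 'de-identification' step might."""
    return [{k: v for k, v in row.items() if k not in DIRECT_IDENTIFIERS} for row in rows]


def count_unique(rows, keys=QUASI_IDENTIFIERS):
    """Count rows whose combination of quasi-identifier values appears exactly once."""
    combos = Counter(tuple(row[k] for k in keys) for row in rows)
    return sum(1 for row in rows if combos[tuple(row[k] for k in keys)] == 1)


deidentified = strip_direct_identifiers(records)
print(count_unique(deidentified), "of", len(deidentified),
      "records still have a unique ZIP/gender/date-of-birth combination")
# 2 of 4 records still have a unique ZIP/gender/date-of-birth combination
```

Anyone who knows a person’s ZIP code, gender and birth date could link them to a unique record. That person’s health fields would then be exposed, even though the name and email are gone.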

End-to-end encryption and the European General Data Protection Regulation (GDPR) can protect your data from legal inquiries. Unfortunately, none of these measures help with the digital footprints everyone leaves behind through everyday use of technology. Even users’ search histories can reveal how far along they are in pregnancy.

What do we really need?

Instead of brainstorming ways to circumvent technology to reduce potential harm and legal trouble, we believe people should advocate for digital privacy protections and limits on data use and sharing. Companies should clearly communicate with people and seek their feedback about three things: how their data is being used, their level of risk of exposure to potential harm, and the value of their data to the company.

People have been concerned about digital data collection in recent years. In a post-Roe world, however, more people can be placed at legal risk simply for doing routine health tracking.

Katie Siek is a professor and Chair of Informatics at Indiana University. Alexander L. Hayes is a Ph.D. student in Health Informatics at Indiana University. Zaidat Ibrahim is a Ph.D. student in Health Informatics at Indiana University. Katie Siek receives funding from the National Science Foundation. She is affiliated with the Computing Research Association and the Computing Community Consortium.